Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up


Yeah, I have actually been thinking of ditching the EDAs. Of all of the machines I have, these are the worst.

I do have the drives from the EDA in an external enclosure right now running ReclaiMe, and all of the data seems to be there. So the data is intact; I just need to figure out how to get these volumes to show back up in the UI.

I really wish I knew how Netgear rebuilt the partitions.


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

@StephenB wrote: Tagging the mods ( @Marc_V and @JeraldM ) to see if they can help with the support aspect. They used to offer per-incident support, but now you need to purchase a 12-month support contract.

Where do you find that 12-month support contract? There's a mention of support on their page, but when you click on it, it just sends you to the login page.


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

With EDA1 connected:

# mdadm --assemble --scan

mdadm: /dev/md/eda1-4 assembled from 1 drive - not enough to start the array.

mdadm: /dev/md/eda1-3 assembled from 1 drive - not enough to start the array.

mdadm: /dev/md/eda1-2 assembled from 1 drive - not enough to start the array.

mdadm: /dev/md/eda1-1 assembled from 1 drive - not enough to start the array.

mdadm: /dev/md/eda1-0 assembled from 1 drive - not enough to start the array.

mdadm: /dev/md/0 has been started with 6 drives (out of 7).

mdadm: /dev/md/data-3 has been started with 6 drives.

mdadm: /dev/md/data-2 has been started with 6 drives.

mdadm: /dev/md/data-1 has been started with 6 drives.

mdadm: /dev/md/data-0 has been started with 6 drives.

mdadm: /dev/md/1 has been started with 6 drives.

mdadm: /dev/md/eda1-4 exists - ignoring

mdadm: /dev/md123 assembled from 1 drive - not enough to start the array.

mdadm: /dev/md/eda1-3 exists - ignoring

mdadm: /dev/md123 assembled from 1 drive - not enough to start the array.

mdadm: /dev/md/eda1-2 exists - ignoring

mdadm: /dev/md123 assembled from 1 drive - not enough to start the array.

mdadm: /dev/md/eda1-1 exists - ignoring

mdadm: /dev/md123 assembled from 1 drive - not enough to start the array.

mdadm: /dev/md/eda1-0 exists - ignoring

mdadm: /dev/md123 assembled from 1 drive - not enough to start the array.

mdadm: Found some drive for an array that is already active: /dev/md/0

mdadm: giving up.

#

#

# cat /proc/mdstat

Personalities : [raid0] [raid1] [raid10] [raid6] [raid5] [raid4]

md1 : active raid10 sda2[0] sdf2[5] sde2[4] sdd2[3] sdc2[2] sdb2[1]

1566720 blocks super 1.2 512K chunks 2 near-copies [6/6] [UUUUUU]

md124 : active raid5 sda3[6] sdf3[11] sde3[10] sdd3[9] sdc3[8] sdb3[7]

14627073280 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md125 : active raid5 sda4[6] sdf4[11] sde4[10] sdd4[8] sdc4[9] sdb4[7]

4883113920 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md126 : active raid5 sda5[5] sdf5[6] sde5[9] sdc5[7] sdd5[10] sdb5[8]

9766864320 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md127 : active raid5 sda6[0] sdd6[5] sdc6[4] sde6[6] sdb6[2] sdf6[1]

9766864640 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md0 : active raid1 sda1[17] sdb1[16] sdc1[18] sdd1[19] sde1[20] sdf1[21]

4192192 blocks super 1.2 [7/6] [U_UUUUU]

[=====>...............] resync = 25.9% (1088576/4192192) finish=0.9min speed=51836K/sec

unused devices: <none>
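The `[7/6] [U_UUUUU]` status on md0 above is the only array in this listing that is short a member; every other array reports a full set. A minimal sketch of how that could be picked out programmatically, assuming the usual `/proc/mdstat` layout where the first number in `[n/m]` is the expected member count and the second is the number currently active. The function name `degraded_arrays` is hypothetical, and the sample text is just the md0 and md1 entries from the output above:

```python
import re

# The md1 and md0 entries copied from the /proc/mdstat output above.
MDSTAT = """\
md1 : active raid10 sda2[0] sdf2[5] sde2[4] sdd2[3] sdc2[2] sdb2[1]
      1566720 blocks super 1.2 512K chunks 2 near-copies [6/6] [UUUUUU]
md0 : active raid1 sda1[17] sdb1[16] sdc1[18] sdd1[19] sde1[20] sdf1[21]
      4192192 blocks super 1.2 [7/6] [U_UUUUU]
"""


def degraded_arrays(mdstat_text):
    """Return {array: (expected, active)} for arrays with missing members."""
    result = {}
    current = None
    for line in mdstat_text.splitlines():
        # An array entry starts with a line such as "md0 : active raid1 ...".
        header = re.match(r"^(md\d+) : ", line)
        if header:
            current = header.group(1)
            continue
        # The following line carries the "[expected/active] [UU_U]" status.
        status = re.search(r"\[(\d+)/(\d+)\] \[[U_]+\]", line)
        if status and current:
            expected, active = int(status.group(1)), int(status.group(2))
            if active < expected:
                result[current] = (expected, active)
    return result


print(degraded_arrays(MDSTAT))
```

On a live system the same function could be fed the contents of `/proc/mdstat` instead of the pasted sample.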

With the other EDA connected:

# mdadm --assemble --scan

mdadm: /dev/md/eda3-0 has been started with 5 drives.

mdadm: device 14 in /dev/md/0 has wrong state in superblock, but /dev/sdk1 seems ok

mdadm: device 15 in /dev/md/0 has wrong state in superblock, but /dev/sdj1 seems ok

mdadm: device 16 in /dev/md/0 has wrong state in superblock, but /dev/sdi1 seems ok

mdadm: device 17 in /dev/md/0 has wrong state in superblock, but /dev/sdh1 seems ok

mdadm: /dev/md/0 has been started with 6 drives (out of 7) and 4 spares.

mdadm: /dev/md/data-3 has been started with 6 drives.

mdadm: /dev/md/data-2 has been started with 6 drives.

mdadm: /dev/md/data-1 has been started with 6 drives.

mdadm: /dev/md/data-0 has been started with 6 drives.

mdadm: /dev/md/1 has been started with 6 drives.

mdadm: Found some drive for an array that is already active: /dev/md/0

mdadm: giving up.

# cat /proc/mdstat

Personalities : [raid0] [raid1] [raid10] [raid6] [raid5] [raid4]

md1 : active raid10 sda2[0] sdf2[5] sde2[4] sdd2[3] sdc2[2] sdb2[1]

1566720 blocks super 1.2 512K chunks 2 near-copies [6/6] [UUUUUU]

md123 : active raid5 sda3[6] sdf3[11] sde3[10] sdd3[9] sdc3[8] sdb3[7]

14627073280 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md124 : active raid5 sda4[6] sdf4[11] sde4[10] sdd4[8] sdc4[9] sdb4[7]

4883113920 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md125 : active raid5 sda5[5] sdf5[6] sde5[9] sdc5[7] sdd5[10] sdb5[8]

9766864320 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md126 : active raid5 sda6[0] sdd6[5] sdc6[4] sde6[6] sdb6[2] sdf6[1]

9766864640 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md0 : active raid1 sda1[17] sdh1[8](W) sdi1[9](W)(S) sdj1[10](W)(S) sdk1[11](W)(S) sdb1[16] sdc1[18] sdd1[19] sde1[20] sdf1[21]

4192192 blocks super 1.2 [7/6] [U_UUUUU]

resync=DELAYED

md127 : active raid5 sdg3[0] sdk3[4] sdj3[3] sdi3[2] sdh3[1]

39046344448 blocks super 1.2 level 5, 64k chunk, algorithm 2 [5/5] [UUUUU]

[>....................] resync = 0.0% (638788/9761586112) finish=6621.3min speed=24568K/sec

unused devices: <none>
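For scale, the `finish=6621.3min` estimate on the md127 resync line above can be reproduced with simple arithmetic. This is a rough sketch, assuming the progress counters are in 1 KiB blocks and the speed is in KiB per second (the usual `/proc/mdstat` units) and that the speed stays constant; `resync_eta_minutes` is a hypothetical helper name:

```python
def resync_eta_minutes(done_kib, total_kib, speed_kib_per_sec):
    """Estimate the remaining resync time in minutes at a constant speed."""
    remaining_kib = total_kib - done_kib
    return remaining_kib / speed_kib_per_sec / 60


# Figures taken from the md127 resync line above:
# (638788/9761586112) ... speed=24568K/sec
eta = resync_eta_minutes(638788, 9761586112, 24568)
print(f"{eta:.0f} minutes, or about {eta / 60 / 24:.1f} days")
```

The result lands within a minute of the figure mdstat reports, which works out to several days of resync for this one array.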


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

I put the drives from both EDAs into an enclosure, and with ReclaiMe all the data is there and not corrupted.

So I think the first thing I am going to do is:

1. Let it scan, which looks like it's going to take a few weeks.

2. Then I'll copy off all of the data that was saved since the last backup. That's not a lot.

3. Then I'll copy off all of the data, just to be extra careful.

4. Then I'll repeat for the next EDA.

Once I have all of that, I'll just reformat them and restore from the backup and the ReclaiMe copy.

But since I am only doing one EDA at a time, I'd like to see if there's a way to fix the other one while that process runs, so that I can save a TON of time.


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

As I said earlier, you likely need --force with eda1. I suggest working with only one EDA500 connected at a time.
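For reference, a forced assembly names the target array and its member partitions explicitly; `--force` asks mdadm to assemble even if the metadata on some members appears out of date. The sketch below only builds and prints such a command line as a dry run and never executes it. The array name and every member partition here are placeholders, not the real eda1 layout, and `forced_assemble_command` is a hypothetical helper:

```python
import shlex


def forced_assemble_command(md_device, members):
    """Build (but do not run) an mdadm forced-assembly command line."""
    if not members:
        raise ValueError("at least one member partition is required")
    return ["mdadm", "--assemble", "--force", md_device, *members]


# Placeholder names: substitute the actual eda1 array and member partitions.
cmd = forced_assemble_command(
    "/dev/md/eda1-0",
    ["/dev/sdg3", "/dev/sdh3", "/dev/sdi3", "/dev/sdj3", "/dev/sdk3"],
)
print(shlex.join(cmd))
```

Printing the command first makes it easy to double-check the device list before anything touches the disks.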


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

@StephenB wrote: As I said earlier, you likely need --force with eda1. I suggest working with only one EDA500 connected at a time.

For each of those outputs, I did have only one EDA connected at a time...

As for --force: do you think it would mess the data up, given that the data seems accessible via ReclaiMe? Or should I give it a shot on one EDA while I'm recovering the other with ReclaiMe?


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

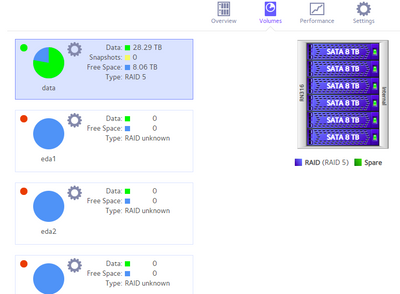

The reason I am asking is that even the one EDA that assembled (see the screenshot above) still shows no data.


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

@joe_schmo wrote:

And about the use of the --force... do you think that that would mess data up given that it seems accessible via reclaime? Or should I give that a shot on one while I'm reclaiming the other?

To some degree this depends on the consequences of data loss, and how much you are prepared to spend on recovery.

I am thinking that the disks in the array are somewhat out of sync. There might be some data corruption, since an out-of-sync array means that writes have somehow been lost. So there is some risk with --force.

You could alternatively

- offload the data with ReclaiMe, and then recreate the array. That would be time consuming, and it would require a lot of storage.

- clone all the disks - requiring you to purchase five 10 TB drives. You could then attempt to manually assemble the array using the clones. Also a fair amount of work, but if successful would eliminate the need to recreate the array and reload all the data.

- find a data recovery service (or find a path to Netgear's recovery service).
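On the out-of-sync point above: each md member's superblock carries an event counter, shown as an `Events :` line by `mdadm --examine`, and members whose counter lags behind the others have missed writes. A minimal sketch of comparing those counters follows. The helper names are hypothetical, and the sample excerpts are made up purely for illustration; they are not taken from this NAS:

```python
import re


def event_counts(examine_outputs):
    """Map each device to the Events counter found in its --examine text."""
    counts = {}
    for device, text in examine_outputs.items():
        match = re.search(r"^\s*Events\s*:\s*(\d+)", text, re.MULTILINE)
        if match:
            counts[device] = int(match.group(1))
    return counts


def stale_members(counts):
    """Return devices whose event count is behind the highest one seen."""
    if not counts:
        return []
    newest = max(counts.values())
    return sorted(dev for dev, n in counts.items() if n < newest)


# Made-up excerpts for illustration only; real text would come from
# running `mdadm --examine` on each member partition.
sample = {
    "/dev/sdg3": "         Events : 1200\n",
    "/dev/sdh3": "         Events : 1200\n",
    "/dev/sdi3": "         Events : 1187\n",
}
print(stale_members(event_counts(sample)))
```

A small gap between counters suggests only a little lost data, while a large gap makes a forced assembly riskier.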


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

So for one of the EDAs I am going to go the ReclaiMe route. I have enough drives for that.

If I unplug the EDAs during reboot, the data on the NAS itself is fine. It only shows up messed up if an EDA is connected during boot.

Here is the EDA that is connected at the moment and that I am trying to get back, EDA2:

md123 : active raid5 sdg3[0] sdk3[4] sdj3[3] sdi3[2] sdh3[1]

39046344448 blocks super 1.2 level 5, 64k chunk, algorithm 2 [5/5] [UUUUU]

[>....................] resync = 0.0% (8659028/9761586112) finish=6192.0min speed=26250K/sec

root@NAS:~# mdadm --stop /dev/md123

mdadm: Cannot get exclusive access to /dev/md123:Perhaps a running process, mounted filesystem or active volume group?

root@NAS:~# umount /dev/md123

root@NAS:~# mdadm --stop /dev/md123

mdadm: stopped /dev/md123

root@NAS:~# mdadm --assemble /dev/md123

mdadm: /dev/md123 not identified in config file.

root@NAS:~# mdadm --assemble --scan

mdadm: /dev/md/eda3-0 has been started with 5 drives.

mdadm: Found some drive for an array that is already active: /dev/md/0

mdadm: giving up.

mdadm: Found some drive for an array that is already active: /dev/md/0

mdadm: giving up.

root@NAS:~# cat /proc/mdstat

Personalities : [raid0] [raid1] [raid10] [raid6] [raid5] [raid4]

md123 : active raid5 sdg3[0] sdk3[4] sdj3[3] sdi3[2] sdh3[1]

39046344448 blocks super 1.2 level 5, 64k chunk, algorithm 2 [5/5] [UUUUU]

[>....................] resync = 0.0% (8659028/9761586112) finish=6192.0min speed=26250K/sec

md124 : active raid5 sda6[0] sdd6[5] sdc6[4] sde6[6] sdb6[2] sdf6[1]

9766864640 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md125 : active raid5 sda5[5] sdf5[6] sde5[9] sdc5[7] sdd5[10] sdb5[8]

9766864320 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md126 : active raid5 sda4[6] sdf4[11] sde4[10] sdd4[8] sdc4[9] sdb4[7]

4883113920 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md127 : active raid5 sda3[6] sdf3[11] sde3[10] sdd3[9] sdc3[8] sdb3[7]

14627073280 blocks super 1.2 level 5, 64k chunk, algorithm 2 [6/6] [UUUUUU]

md0 : active raid1 sda1[17] sdj1[10](W)(S) sdk1[11](W)(S) sdb1[16] sdc1[18] sdd1[19] sde1[20] sdf1[21] sdi1[9](W)

4192192 blocks super 1.2 [7/7] [UUUUUUU]

md1 : active raid10 sda2[0] sdf2[5] sde2[4] sdd2[3] sdc2[2] sdb2[1]

1566720 blocks super 1.2 512K chunks 2 near-copies [6/6] [UUUUUU]

root@NAS:~# cat /proc/mdstat

Personalities : [raid0] [raid1] [raid10] [raid6] [raid5] [raid4]

md123 : active raid5 sdg3[0] sdk3[4] sdj3[3] sdi3[2] sdh3[1]

39046344448 blocks super 1.2 level 5, 64k chunk, algorithm 2 [5/5] [UUUUU]

[>....................] resync = 0.1% (10726372/9761586112) finish=6214.8min speed=26148K/sec

Somehow the system now thinks EDA2 is called EDA3. Not sure if that is part of the problem or just a side effect.


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

So while I still hold out hope that I'll figure out how to make the NAS see the 2 EDAs again.

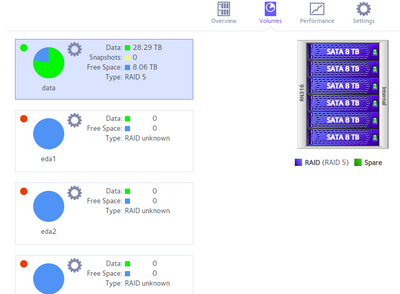

If I decide to wipe them and then start from scratch, how do I get ride of the 3 that show up here:


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

@joe_schmo wrote: If I decide to wipe them and then start from scratch, how do I get rid of the 3 that show up here:

You can click on the settings wheel next to each inactive volume, and destroy it. Then create a new volume.


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

I can't see the attachment. Are the three you are talking about three from one EDA that should be one? If that's the case, they aren't really volumes that the OS recognizes, and deleting the ones that aren't "real" might cause a problem. On mine, I booted the NAS without the offending EDA and it then showed one "missing" volume, which I deleted. I then wiped all the partitions from the drives so the NAS could start with them from scratch.


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

Right now, I'm not deleting anything while I grab data off of them via ReclaiMe.

Then I will try recovering them through mdadm.

And if that doesn't work either, I'll rebuild from the backup and the ReclaiMe copy.


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

Contacting ReadyNAS support via email will be the best option if you want to seek assistance from them. You can send an email to readynassupport@netgear.com. Data recovery may apply, but no support contract is available for ReadyNAS devices.

HTH


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

@Marc_V wrote:

Contacting ReadyNAS support via email will be the best option if you want to seek assistance from them. You can send an email to readynassupport@netgear.com. Data recovery may apply, but no support contract is available for ReadyNAS devices.

HTH

Hi @Marc_V

When did that change? I remember back in 2018/19 I was able to spend $150 for a support case.


Re: RN316 Upgraded Firmware, EDA500 Volumes are messed up

That changed just this year: ReadyNAS now gets support via email, and contracts are no longer available.
