RN104: Disk failed, replaced, resync, data still degraded, NAS reboot = repeated resync problem

Hello everyone Michał from Poland here,

I have a RN104 with 2x 4TB WD drives (initially 2x WD Green [Desktop i know]).

Recently one of the drives has been failing, so i replaced it with a 4TB WD Red, everything was going fine, few hours of resync and done.

Now i have 2 problems:

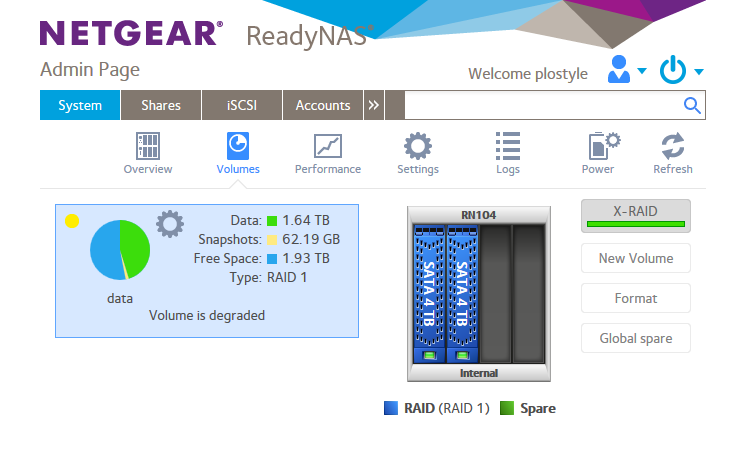

- Both disk show a green light, but the data is still degraded. Any ideas what should i do to bring it back to normal?

- After resync has completed - i have restarted the NAS (3 times now) - after the reboot - data resync starts over again (it takes a few hours).

I have searched the forums and google for an answer but no luck... maybe somebody can help me out with my problems. Below are the recent logs, and a screnshot:

15 Jan 2019 01:00:18 Volume: Volume data is Degraded.

15 Jan 2019 00:44:01 Volume: The resync operation finished on volume data. However, the volume is still degraded.

15 Jan 2019 00:43:51 Disk: Disk in channel 1 (Internal) changed state from RESYNC to ONLINE.

14 Jan 2019 18:14:43 System: ReadyNASOS background service started.

14 Jan 2019 18:14:32 Volume: Resyncing started for Volume data.

14 Jan 2019 18:14:32 Volume: Volume data is Degraded.

14 Jan 2019 18:11:31 System: The system is rebooting.

Re: RN104: Disk failed, replaced, resync, data still degraded, NAS reboot = repeated resync problem

Can you check the SMART stats for both disks? Perhaps also copy/paste mdstat.log here (you can use the "insert code" tool for that).

Also, what firmware are you running?

Re: RN104: Disk failed, replaced, resync, data still degraded, NAS reboot = repeated resync problem

Hi again, thanks for your reply.

I have the newest firmware version (6.9.4 Hotfix 1).

Below is the mdstat.log:

Personalities : [raid0] [raid1] [raid10] [raid6] [raid5] [raid4]

md127 : active raid1 sda3[0] sdb3[2](S)

3902166784 blocks super 1.2 [2/1] [U_]

bitmap: 17/30 pages [68KB], 65536KB chunk

md1 : active raid1 sda2[0] sdb2[1]

523712 blocks super 1.2 [2/2] [UU]

md0 : active raid1 sda1[3] sdb1[2]

4190208 blocks super 1.2 [2/2] [UU]

unused devices: <none>

/dev/md/0:

Version : 1.2

Creation Time : Wed Apr 15 05:15:17 2015

Raid Level : raid1

Array Size : 4190208 (4.00 GiB 4.29 GB)

Used Dev Size : 4190208 (4.00 GiB 4.29 GB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Update Time : Wed Jan 16 18:32:20 2019

State : clean

Active Devices : 2

Working Devices : 2

Failed Devices : 0

Spare Devices : 0

Name : 2fe4f60e:0 (local to host 2fe4f60e)

UUID : b939f237:dcc8fe34:e975d6b5:7b3f6c4e

Events : 57378

Number Major Minor RaidDevice State

3 8 1 0 active sync /dev/sda1

2 8 17 1 active sync /dev/sdb1

/dev/md/1:

Version : 1.2

Creation Time : Sat Jan 12 12:25:30 2019

Raid Level : raid1

Array Size : 523712 (511.44 MiB 536.28 MB)

Used Dev Size : 523712 (511.44 MiB 536.28 MB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Update Time : Wed Jan 16 18:30:00 2019

State : clean

Active Devices : 2

Working Devices : 2

Failed Devices : 0

Spare Devices : 0

Name : 2fe4f60e:1 (local to host 2fe4f60e)

UUID : 6eb0e2e7:ac8458bc:f4b8df87:ff5ca7fb

Events : 19

Number Major Minor RaidDevice State

0 8 2 0 active sync /dev/sda2

1 8 18 1 active sync /dev/sdb2

/dev/md/data-0:

Version : 1.2

Creation Time : Wed Apr 15 05:15:17 2015

Raid Level : raid1

Array Size : 3902166784 (3721.40 GiB 3995.82 GB)

Used Dev Size : 3902166784 (3721.40 GiB 3995.82 GB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Intent Bitmap : Internal

Update Time : Tue Jan 15 19:54:27 2019

State : clean, degraded

Active Devices : 1

Working Devices : 2

Failed Devices : 0

Spare Devices : 1

Name : 2fe4f60e:data-0 (local to host 2fe4f60e)

UUID : f499f3cd:f84f401f:f890486d:667c8673

Events : 30171

Number Major Minor RaidDevice State

0 8 3 0 active sync /dev/sda3

- 0 0 1 removed

2 8 19 - spare /dev/sdb3
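For anyone reading the output above: in mdstat notation, `[2/1] [U_]` means the array expects two members but only one is active, and `(S)` marks a spare. So md127 (the data volume) is running on sda3 alone, with sdb3 attached only as a spare, which matches the `mdadm` detail for /dev/md/data-0. A minimal Python sketch of that reading follows; the function names `summarize` and `degraded` are made up for illustration, and the parser only handles the simple layout shown in this post.

```python
import re

# Summary section of the mdstat.log quoted above (blank lines dropped).
MDSTAT = """\
md127 : active raid1 sda3[0] sdb3[2](S)
      3902166784 blocks super 1.2 [2/1] [U_]
md1 : active raid1 sda2[0] sdb2[1]
      523712 blocks super 1.2 [2/2] [UU]
md0 : active raid1 sda1[3] sdb1[2]
      4190208 blocks super 1.2 [2/2] [UU]
"""


def summarize(mdstat_text):
    """Map each array name to its expected/active member counts and spares."""
    arrays = {}
    current = None
    for line in mdstat_text.splitlines():
        # Header line, e.g. "md127 : active raid1 sda3[0] sdb3[2](S)"
        header = re.match(r"^(md\d+) : \w+ \w+ (.*)$", line)
        if header:
            current = header.group(1)
            members = header.group(2).split()
            # Members flagged "(S)" are spares, not active mirrors.
            spares = [m.split("[")[0] for m in members if m.endswith("(S)")]
            arrays[current] = {"spares": spares}
            continue
        # Status line, e.g. "... [2/1] [U_]" = 2 expected, 1 active.
        counts = re.search(r"\[(\d+)/(\d+)\]", line)
        if counts and current:
            arrays[current]["expected"] = int(counts.group(1))
            arrays[current]["active"] = int(counts.group(2))
    return arrays


def degraded(arrays):
    """Return the names of arrays with fewer active members than expected."""
    return sorted(n for n, a in arrays.items() if a["active"] < a["expected"])


arrays = summarize(MDSTAT)
print(degraded(arrays))            # ['md127']
print(arrays["md127"]["spares"])   # ['sdb3']
```

The small md0 and md1 arrays report `[2/2] [UU]`, so only the large data array is affected.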

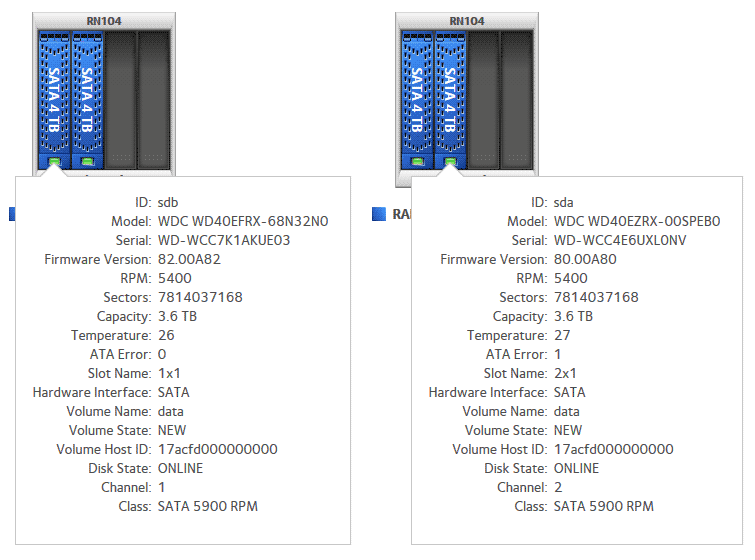

and disk_info.log:

Device: sdb

Controller: 0

Channel: 0

Model: WDC WD40EFRX-68N32N0

Serial: WD-WCC7K1AKUE03

Firmware: 82.00A82

Class: SATA

RPM: 5400

Sectors: 7814037168

Pool: data

PoolType: RAID 1

PoolState: 3

PoolHostId: 2fe4f60e

Health data

ATA Error Count: 0

Reallocated Sectors: 0

Reallocation Events: 0

Spin Retry Count: 0

Current Pending Sector Count: 0

Uncorrectable Sector Count: 0

Temperature: 26

Start/Stop Count: 13

Power-On Hours: 102

Power Cycle Count: 1

Load Cycle Count: 13

Device: sda

Controller: 0

Channel: 1

Model: WDC WD40EZRX-00SPEB0

Serial: WD-WCC4E6UXL0NV

Firmware: 80.00A80

Class: SATA

RPM: 5400

Sectors: 7814037168

Pool: data

PoolType: RAID 1

PoolState: 3

PoolHostId: 2fe4f60e

Health data

ATA Error Count: 1

Reallocated Sectors: 0

Reallocation Events: 0

Spin Retry Count: 0

Current Pending Sector Count: 4

Uncorrectable Sector Count: 3

Temperature: 28

Start/Stop Count: 9396

Power-On Hours: 27349

Power Cycle Count: 1397

Load Cycle Count: 1500666

Hope this makes some sense.
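To make the two disks easier to compare, here is a small Python sketch that flags every non-zero error counter from the disk_info.log above. The numbers are copied from that log. The choice of which counters to watch (`WATCHED`) and the helper name `nonzero_counters` are assumptions for illustration, not anything ReadyNAS OS itself defines.

```python
# Health counters copied from the disk_info.log quoted above.
DISKS = {
    "sdb": {  # WDC WD40EFRX-68N32N0 (the new WD Red)
        "ATA Error Count": 0,
        "Reallocated Sectors": 0,
        "Reallocation Events": 0,
        "Spin Retry Count": 0,
        "Current Pending Sector Count": 0,
        "Uncorrectable Sector Count": 0,
    },
    "sda": {  # WDC WD40EZRX-00SPEB0 (the remaining WD Green)
        "ATA Error Count": 1,
        "Reallocated Sectors": 0,
        "Reallocation Events": 0,
        "Spin Retry Count": 0,
        "Current Pending Sector Count": 4,
        "Uncorrectable Sector Count": 3,
    },
}

# Assumption: these are the counters worth checking for non-zero values.
WATCHED = (
    "ATA Error Count",
    "Reallocated Sectors",
    "Reallocation Events",
    "Spin Retry Count",
    "Current Pending Sector Count",
    "Uncorrectable Sector Count",
)


def nonzero_counters(disks):
    """Return {disk: {counter: value}} for every watched counter above zero."""
    flagged = {}
    for name, stats in disks.items():
        hits = {key: stats[key] for key in WATCHED if stats[key] != 0}
        if hits:
            flagged[name] = hits
    return flagged


for disk, hits in nonzero_counters(DISKS).items():
    print(disk, hits)
```

With the values above, only sda is flagged (1 ATA error, 4 pending sectors, 3 uncorrectable sectors); the new sdb reports zero for every watched counter.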


