RN104 degraded, but no progress

Hi all.

I have been using my RN104 as a backup device for a very, very long time without any issues... Cool thing: not high performance, but good that it works :-).

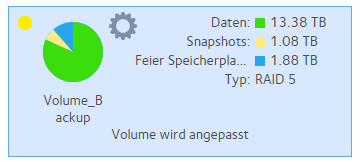

I changed one of the 4 hard drives in my RN104. Before, I had 4x 4TB WD Red hard drives. I exchanged an old one some days/weeks ago (a regular exchange, without a failure). Now I have the following setup:

1x WD80EFBX; 2x WD60EFAX; 1x WD60EFRX

The front display shows "DEGRADED" error message - same on the web frontend, but no progress.

"green" traffic light on all 4 devices

mdadm --detail /dev/md127 shows "degraded", but I do not know why, and I have no idea how that happened (is this normal?) or how to fix it.

Any proposal is welcome.

Thanks. Norbert

Checking /proc/mdstat shows no progress:

Personalities : [raid0] [raid1] [raid10] [raid6] [raid5] [raid4]

md126 : active raid5 sda3[5] sdc3[3] sdd3[6] sdb3[4]

11706503424 blocks super 1.2 level 5, 64k chunk, algorithm 2 [4/4] [UUUU]

md127 : active raid5 sdc4[1] sda4[3] sdb4[2]

5860115712 blocks super 1.2 level 5, 64k chunk, algorithm 2 [4/3] [_UUU]

md1 : active raid10 sda2[0] sdd2[3] sdc2[2] sdb2[1]

1044480 blocks super 1.2 512K chunks 2 near-copies [4/4] [UUUU]

md0 : active raid1 sda1[5] sdc1[3] sdd1[6] sdb1[4]

4190208 blocks super 1.2 [4/4] [UUUU]

unused devices: <none>
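For anyone reading along: the `[4/3] [_UUU]` on md127 means four member slots with only three active, and the first slot is the empty one. Below is a minimal sketch for pulling the degraded arrays out of mdstat-style text, assuming a POSIX `awk` is available on the NAS. The function name `degraded_arrays` is made up for illustration.

```shell
# Print the name of every md array whose [slots/active] counter shows
# fewer active members than slots.
# $1: path to an mdstat-format file (defaults to the live /proc/mdstat).
degraded_arrays() {
    awk '
        /^md[0-9]+ :/ { name = $1 }              # remember the current array
        match($0, /\[[0-9]+\/[0-9]+\]/) {        # e.g. "[4/3]"
            split(substr($0, RSTART + 1, RLENGTH - 2), n, "/")
            if (n[2] + 0 < n[1] + 0) print name  # active < slots: degraded
        }
    ' "${1:-/proc/mdstat}"
}
```

Run against the output above, this would report md127 only, since md126, md1 and md0 all show `[4/4]`.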

/var/log# mdadm --detail /dev/md126

/dev/md126:

Version : 1.2

Creation Time : Fri Nov 1 10:17:34 2019

Raid Level : raid5

Array Size : 11706503424 (11164.19 GiB 11987.46 GB)

Used Dev Size : 3902167808 (3721.40 GiB 3995.82 GB)

Raid Devices : 4

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Fri Nov 5 20:15:36 2021

State : clean

Active Devices : 4

Working Devices : 4

Failed Devices : 0

Spare Devices : 0

Layout : left-symmetric

Chunk Size : 64K

Consistency Policy : unknown

Name : 0e349ed8:Volume_Backup-0 (local to host 0e349ed8)

UUID : a5655641:0de3a15d:04ae0e91:b294b7d0

Events : 235152

Number Major Minor RaidDevice State

5 8 3 0 active sync /dev/sda3

4 8 19 1 active sync /dev/sdb3

6 8 51 2 active sync /dev/sdd3

3 8 35 3 active sync /dev/sdc3

/dev/md127:

Version : 1.2

Creation Time : Fri Nov 15 09:24:58 2019

Raid Level : raid5

Array Size : 5860115712 (5588.64 GiB 6000.76 GB)

Used Dev Size : 1953371904 (1862.88 GiB 2000.25 GB)

Raid Devices : 4

Total Devices : 3

Persistence : Superblock is persistent

Update Time : Fri Nov 5 20:15:55 2021

State : clean, degraded

Active Devices : 3

Working Devices : 3

Failed Devices : 0

Spare Devices : 0

Layout : left-symmetric

Chunk Size : 64K

Consistency Policy : unknown

Name : 0e349ed8:Volume_Backup-1 (local to host 0e349ed8)

UUID : 31979138:f23cfef8:dca0bbed:4e1804e3

Events : 113565

Number Major Minor RaidDevice State

- 0 0 0 removed

1 8 36 1 active sync /dev/sdc4

2 8 20 2 active sync /dev/sdb4

3 8 4 3 active sync /dev/sda4
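The useful bit in the md127 detail output is the gap between "Raid Devices : 4" and "Total Devices : 3", together with the "removed" entry in slot 0. A small sketch that computes that gap from `mdadm --detail` text on stdin, for one array at a time; `missing_members` is a hypothetical helper name, not part of mdadm.

```shell
# Reads the `mdadm --detail` text of ONE array on stdin and prints how many
# member slots have no device (Raid Devices minus Total Devices).
missing_members() {
    awk -F ' *: *' '
        { key = $1; sub(/^ +/, "", key) }       # strip leading indentation
        key == "Raid Devices"  { raid  = $2 }
        key == "Total Devices" { total = $2 }
        END { print raid - total }
    '
}

# Possible usage on the NAS (needs root):
#   mdadm --detail /dev/md127 | missing_members
```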

Accepted Solutions

I agree it doesn't look like it's expanding. First, make sure a 4th partition was created: fdisk -l /dev/sdd. You can also compare the size of the 4th partition to the one on another drive: fdisk -l /dev/sdc. If the partition is there and the right size, then the following should either add it or give you an error message explaining why it won't: mdadm /dev/md127 --add /dev/sdd4 --verbose.

If the partition isn't there, don't try to use fdisk to create it; it'll refuse to start it in the right place. I recommend you install and use parted for that.
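The "check the partition, then add it" steps above can be sketched as a small guard. This is only an illustration under stated assumptions: it reads the partition size from sysfs (`/sys/class/block/<partition>/size`, which the kernel reports in 512-byte sectors) instead of parsing fdisk output, and the helper names `part_sectors` and `same_size`, as well as the optional sysfs-root argument, are made up here.

```shell
# Print the size of a partition in 512-byte sectors.
# $1: partition name such as sdd4
# $2: sysfs root (hypothetical override, handy for trying this without a NAS)
part_sectors() {
    cat "${2:-/sys/class/block}/$1/size"
}

# Succeed only if both partitions exist and have the same sector count.
# $1, $2: partition names; $3: optional sysfs root as above.
same_size() {
    a=$(part_sectors "$1" "$3") || return 1
    b=$(part_sectors "$2" "$3") || return 1
    [ -n "$a" ] && [ "$a" = "$b" ]
}

# Possible usage on the NAS (needs root); the mdadm line is the one quoted above:
#   same_size sdd4 sdc4 && mdadm /dev/md127 --add /dev/sdd4 --verbose
```

If the new partition is missing, `same_size` fails and nothing is added, which is the point where parted would come in.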

All Replies

Re: RN104 degraded, but no progress

The array is degraded because you replaced a drive. It will remain degraded until the resync completes.

"Degraded" means that there is no RAID redundancy, so if one of the original disks fails the volume will be lost.

@XAffi wrote:

2x WD60EFAX;

The WD60EFAX drives use SMR technology. Several folks here have found they misbehave in OS-6 ReadyNAS. SMR drives have variable write speeds (sustained writes can result in glacial speeds).

Generally I don't recommend them for any NAS - not saying you should replace them, but I do suggest keeping an eye out for problems.

Re: RN104 degraded, but no progress

Hi Stephan.

Thanks for your reply.

1. "SMR drives": I have had them for almost 2 years now and, yes, I am in the process of replacing them step by step. The one I replaced was also an SMR drive.

2. Is the resync really running? Usually I see it in /proc/mdstat. Any suggestion where I can check whether a resync is running if it is not shown on the ReadyNAS itself, in the web interface, or in /proc/mdstat?

Re: RN104 degraded, but no progress

@XAffi wrote:

2. Is the resync really running? Usually I see it in /proc/mdstat. Any suggestion where I can check whether a resync is running if it is not shown on the ReadyNAS itself, in the web interface, or in /proc/mdstat?

That's a good question. Usually it is shown on the volume page. Your mdstat post shows sdd is missing from md127 (but it is in the md126 raid group).

What drive did you recently replace?

Re: RN104 degraded, but no progress

I replaced 1x WD60EFAX with 1x WD80EFBX...

And yes, I know the difference between md126 and md127... Any idea?

Re: RN104 degraded, but no progress

@XAffi wrote:

I replaced 1x WD60EFAX with 1x WD80EFBX...

So that should be sda.

@XAffi wrote:

I know the difference between md126 and md127... Any idea?

The puzzle is why sdd is missing from md127. Replacing sda worked, so sdd must have dropped out after the resync of the WD80EFBX completed.

Have you tried rebooting the NAS?

Re: RN104 degraded, but no progress

Hi Sandshark,

Thanks. So you assumed that, for whatever reason, the resync had not started by itself...

I have checked and seen that all partitions are present, and the sizes match as well...

I will watch it closely, but it seems to be working...

fdisk -l /dev/sdd

Disk /dev/sdd: 7.3 TiB, 8001563222016 bytes, 15628053168 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 4096 bytes

I/O size (minimum/optimal): 4096 bytes / 4096 bytes

Disklabel type: gpt

Disk identifier: E93A4BDA-2E80-4CE4-9C98-3E82A6477A2F

Device Start End Sectors Size Type

/dev/sdd1 64 8388671 8388608 4G Linux RAID

/dev/sdd2 8388672 9437247 1048576 512M Linux RAID

/dev/sdd3 9437248 7814037119 7804599872 3.6T Linux RAID

/dev/sdd4 7814037120 11721045119 3907008000 1.8T Linux RAID

fdisk -l /dev/sdc

Disk /dev/sdc: 5.5 TiB, 6001175126016 bytes, 11721045168 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 4096 bytes

I/O size (minimum/optimal): 4096 bytes / 4096 bytes

Disklabel type: gpt

Disk identifier: 328949D9-CF99-4854-BAA8-3FD7BCFE4E4C

Device Start End Sectors Size Type

/dev/sdc1 64 8388671 8388608 4G Linux RAID

/dev/sdc2 8388672 9437247 1048576 512M Linux RAID

/dev/sdc3 9437248 7814037119 7804599872 3.6T Linux RAID

/dev/sdc4 7814037120 11721045119 3907008000 1.8T Linux RAID

root@Saturn:~# mdadm /dev/md127 --add /dev/sdd4 --verbose

mdadm: added /dev/sdd4

cat /proc/mdstat

Personalities : [raid0] [raid1] [raid10] [raid6] [raid5] [raid4]

md126 : active raid5 sda3[5] sdc3[3] sdd3[6] sdb3[4]

11706503424 blocks super 1.2 level 5, 64k chunk, algorithm 2 [4/4] [UUUU]

md127 : active raid5 sdd4[4] sdc4[1] sda4[3] sdb4[2]

5860115712 blocks super 1.2 level 5, 64k chunk, algorithm 2 [4/3] [_UUU]

[>....................] recovery = 0.0% (647904/1953371904) finish=5449.9min speed=5971K/sec

md1 : active raid10 sda2[0] sdd2[3] sdc2[2] sdb2[1]

1044480 blocks super 1.2 512K chunks 2 near-copies [4/4] [UUUU]

md0 : active raid1 sda1[5] sdc1[3] sdd1[6] sdb1[4]

4190208 blocks super 1.2 [4/4] [UUUU]

unused devices: <none>
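To put the `finish=5449.9min` figure in perspective: that is roughly 91 hours, so close to four days at the current speed. A small sketch that pulls the progress and estimate out of mdstat-style text, again assuming POSIX `awk`; `recovery_eta` is a made-up name for illustration.

```shell
# For every array with a running recovery/resync, print:
#   <array> <percent done> <estimated hours left>
# $1: path to an mdstat-format file (defaults to the live /proc/mdstat).
recovery_eta() {
    awk '
        /^md[0-9]+ :/ { name = $1 }                  # remember the current array
        /(recovery|resync) *=/ {
            pct = ""; mins = 0
            for (i = 1; i <= NF; i++) {
                if ($i ~ /%$/) pct = $i              # e.g. "0.0%"
                if ($i ~ /^finish=/) {               # e.g. "finish=5449.9min"
                    mins = $i
                    sub(/^finish=/, "", mins)
                    sub(/min$/, "", mins)
                }
            }
            printf "%s %s %.1fh\n", name, pct, mins / 60
        }
    ' "${1:-/proc/mdstat}"
}
```

The estimate moves around a lot with the current speed (and SMR drives can slow down badly under sustained writes), so it is worth re-running now and then rather than trusting the first number.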

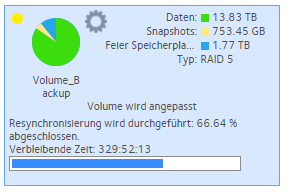

And finally:

Re: RN104 degraded, but no progress

It was clear from the mdstat output that the partition never got added to the array, which is what would have started the resync. The only question was why, and we still don't know. But I agree that adding it manually seems to have done the trick. Keep a lookout in case it fails somewhere along the way.

For whatever reason, ReadyNAS OS seems to increase the number of drives in an array before it adds a device to fill the empty slot. It is much more common to first add the device (so it becomes a spare), then change the number of devices (which will automatically start integrating the spare into the RAID).
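The more common order described above (add first, then raise the device count) can be sketched as a dry run. This only prints the two mdadm commands and executes nothing. The function name `plan_add_then_grow` and the device names in the usage line are placeholders, and a real reshape may need extra options (a backup file, for example) depending on the mdadm version and array layout.

```shell
# Print, without running them, the two steps of a conventional expansion.
# $1: array device, $2: new member partition, $3: new total member count.
plan_add_then_grow() {
    # Step 1: the new partition joins the array as a spare.
    printf 'mdadm %s --add %s\n' "$1" "$2"
    # Step 2: raising the member count makes md reshape onto the spare.
    printf 'mdadm --grow %s --raid-devices=%s\n' "$1" "$3"
}

# Example with placeholder names (not the devices from this thread):
#   plan_add_then_grow /dev/mdX /dev/sdY4 5
```

In this thread the array already had four slots with one of them empty, so only the `--add` step was needed.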

Re: RN104 degraded, but no progress

Sandshark, I will keep watching, but for the time being things are running as expected... Thanks again. Honestly, the RN104 is already a little bit older, and I am happy that adding an 8TB drive works at all... The resync should be done by next week at the latest.

Re: RN104 degraded, but no progress

Hi Stephan,

Thanks for your effort.

The newly added WD80EFBX is sdd - all other drives show 5.5 TiB:

fdisk -l /dev/sdd

Disk /dev/sdd: 7.3 TiB, 8001563222016 bytes, 15628053168 sectors

A reboot did not fix anything earlier. I followed the manual approach as posted, and in a couple of days I will see whether this was the magic trick.

Re: RN104 degraded, but no progress

Quick/Last feedback...

Resync finished successfully. Thanks.

Norbert